- This version

- http://www.multi-access.de/mint/irm/2012/20120822/

- Latest version

- http://www.multi-access.de/mint/irm/

- Previous version

- Editors

- Sebastian Feuerstack, UFSCar

This document is available under the W3C Document License. See the W3C Intellectual Rights Notice and Legal Disclaimers for additional information.

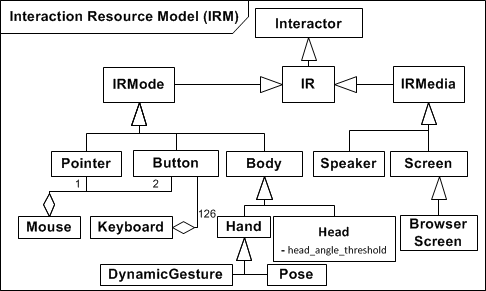

Interaction resource interactors (IRs) specify characteristics of physical or virtual devices that are used to interact with a system. Physical devices are, for instance, a mouse, a joystick, or a keyboard. Virtual devices describe software that controls hardware, such as gesture or body movement recognition driven by a webcam, or a light or a speaker connected to a home automation system. Unlike physical devices, for which the IR specification is fixed and often simple, virtual device IRs are more complex and are often designed with a specific application in mind.

This document gives some examples of interaction resource interactors that can implement a certain mode or medium.

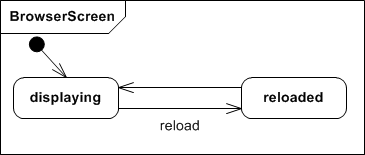

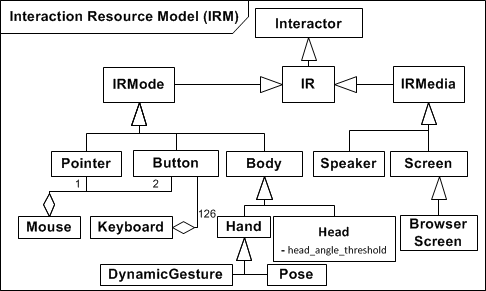

Figure 1. Overview

Figure 1 depicts the relations between some of the interaction resources (IRs) that we have specified so far. IRs are modeled as interactors.

The IRM distinguishes between mode and media IRs. The former represent multimodal control options of the user; the latter specify output-only media.

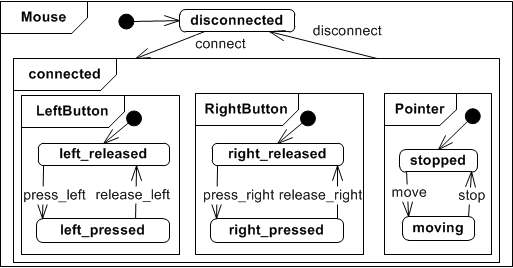

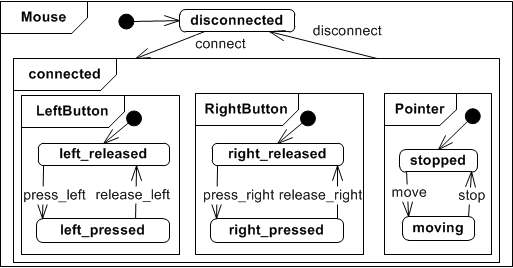

Figure 2. Mouse Interactor

Figure 2 depicts an aggregated IR representing a mouse. It is composed of a pointer and two buttons.
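The aggregation described for Figure 2 can be sketched as a small composite data structure. This is only an illustration in Python, not the model used by the platform: the class names `Pointer`, `Button`, and `Mouse`, their fields, and the `move`, `press`, and `release` methods are assumptions made for this example.

```python
from dataclasses import dataclass, field


@dataclass
class Pointer:
    """Hypothetical pointer part: tracks a 2D position."""
    x: int = 0
    y: int = 0

    def move(self, dx: int, dy: int) -> None:
        self.x += dx
        self.y += dy


@dataclass
class Button:
    """Hypothetical button part with a pressed/released state."""
    name: str
    pressed: bool = False

    def press(self) -> None:
        self.pressed = True

    def release(self) -> None:
        self.pressed = False


@dataclass
class Mouse:
    """Aggregated IR: one pointer plus two buttons (names are assumptions)."""
    pointer: Pointer = field(default_factory=Pointer)
    left: Button = field(default_factory=lambda: Button("left"))
    right: Button = field(default_factory=lambda: Button("right"))


mouse = Mouse()
mouse.pointer.move(10, -5)  # the pointer part changes independently
mouse.left.press()          # each button keeps its own state
```

Keeping the parts as separate objects mirrors the idea of an aggregated IR: each part could, in principle, be observed or reused on its own.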

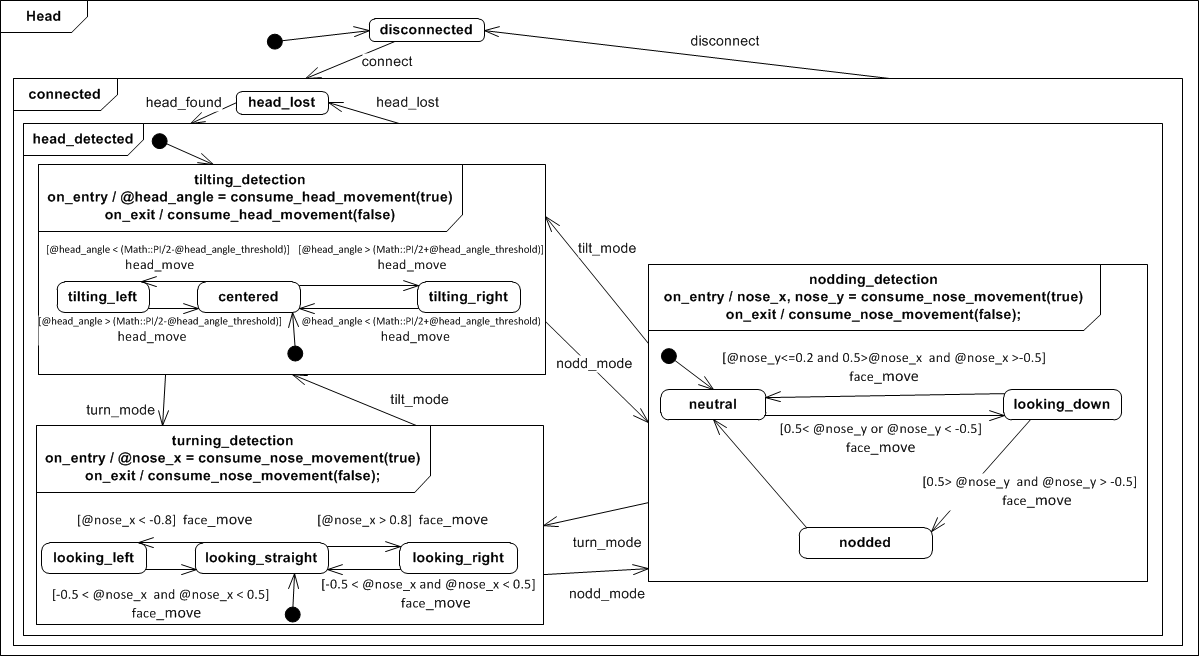

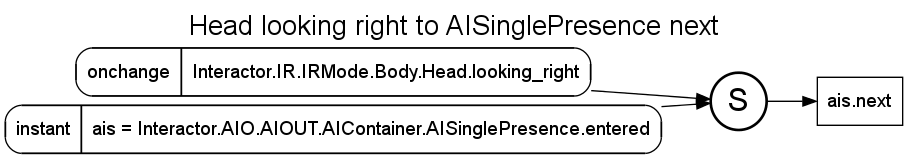

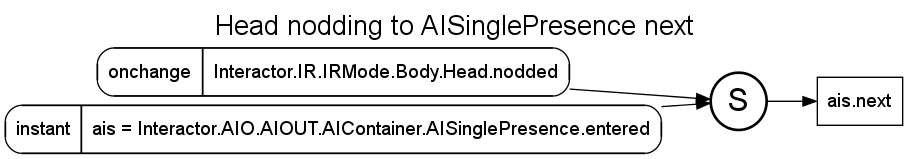

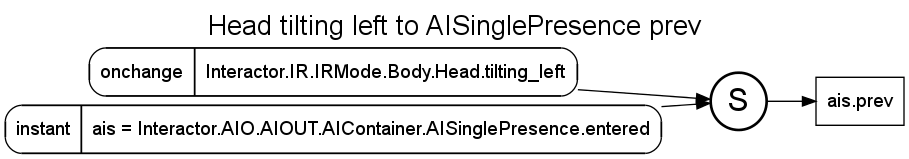

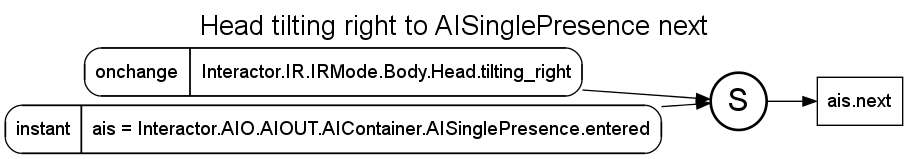

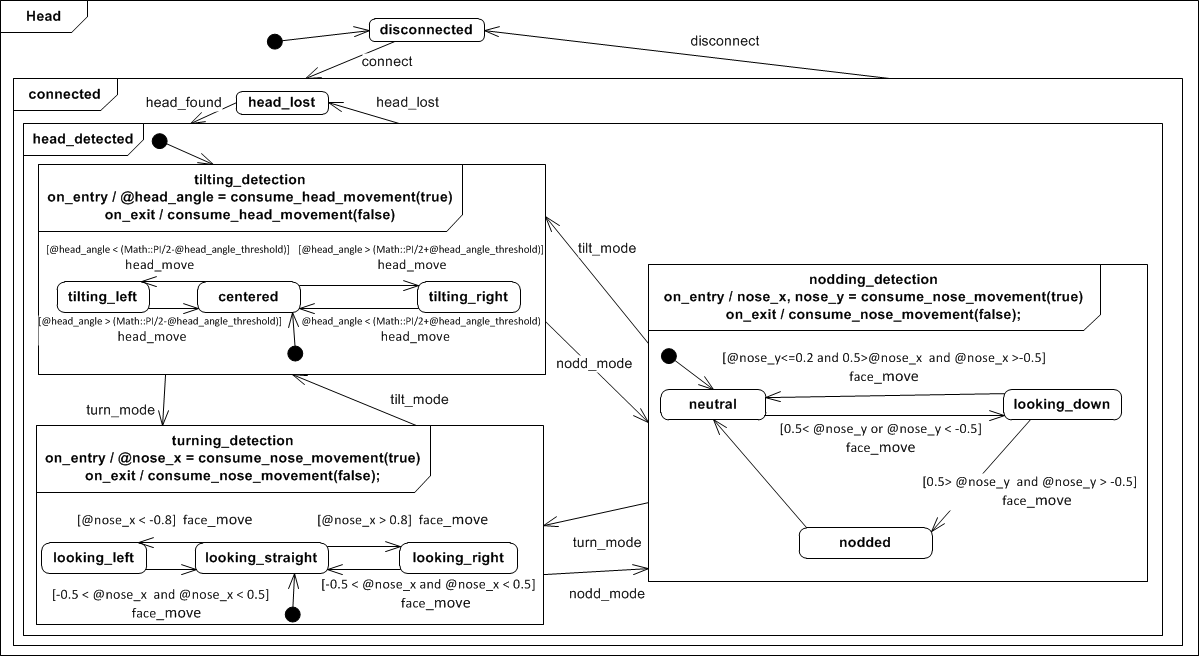

Figure 3. Head-based Interface Control

The head interactor can be used to trigger events based on head movements. So far, three different modes have been specified: tilting, turning, and nodding.
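As a rough sketch of how the three modes might be told apart, the following Python example maps head rotation angles to a mode. The mapping of tilting to roll, turning to yaw, and nodding to pitch, the `classify` function, and the threshold of 15 degrees are all assumptions for illustration; none of them is taken from the IR specification.

```python
from enum import Enum
from typing import Optional


class HeadMode(Enum):
    TILTING = "tilting"
    TURNING = "turning"
    NODDING = "nodding"


# Assumed minimum rotation in degrees before a movement counts as a mode.
THRESHOLD_DEG = 15.0


def classify(roll: float, yaw: float, pitch: float) -> Optional[HeadMode]:
    """Return the mode whose (assumed) axis shows the largest rotation,
    or None if no axis reaches the assumed threshold."""
    candidates = {
        HeadMode.TILTING: abs(roll),
        HeadMode.TURNING: abs(yaw),
        HeadMode.NODDING: abs(pitch),
    }
    mode, magnitude = max(candidates.items(), key=lambda item: item[1])
    return mode if magnitude >= THRESHOLD_DEG else None
```

An application could call `classify` on each tracker update and trigger an event only when the returned mode changes.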

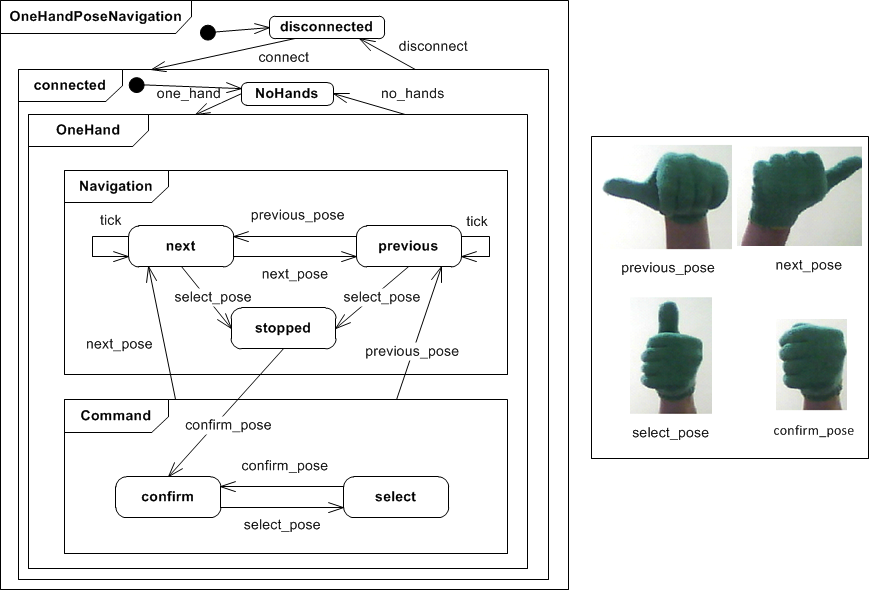

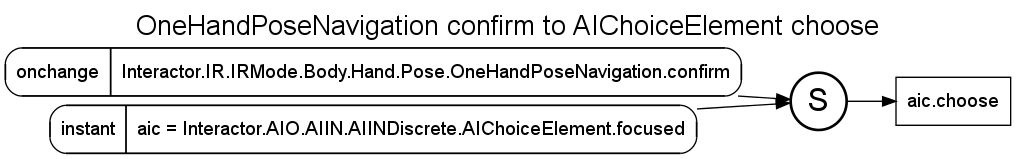

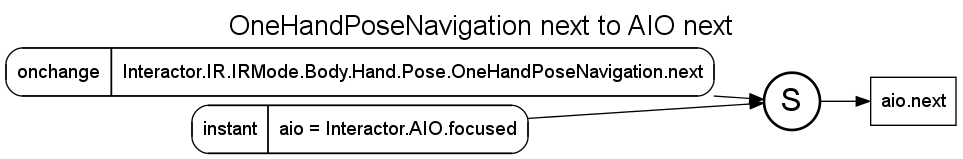

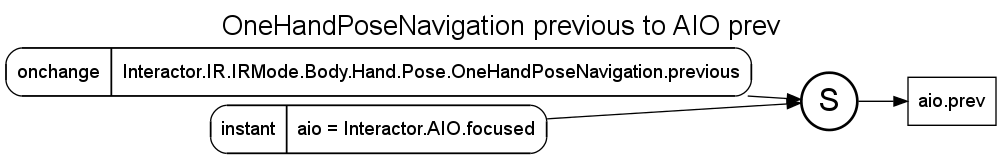

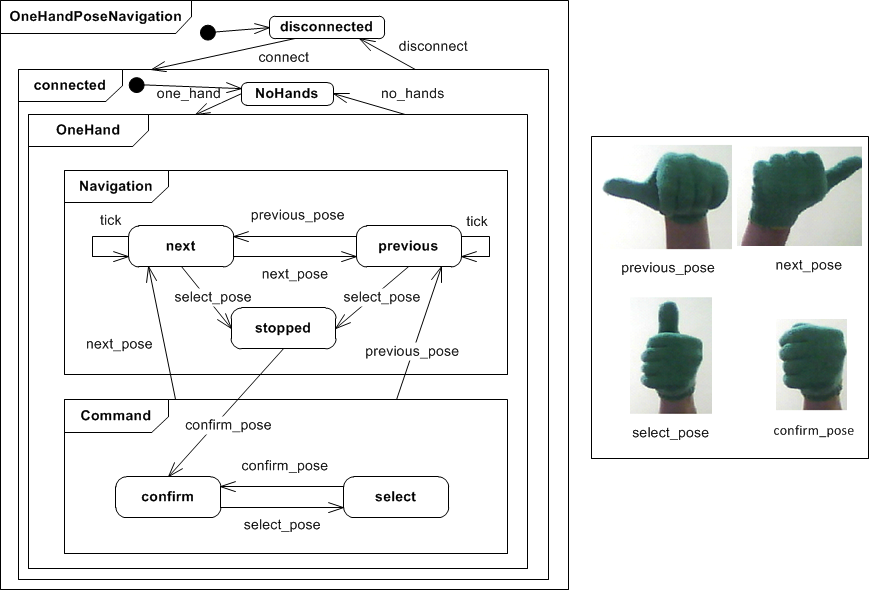

Figure 4. Posture-based Interface Navigation

Figure 4 shows a virtual IR that enables basic user interface navigation and selection of elements using the four different hand postures and movements shown on the left of Figure 4. Green gloves and a webcam are used to capture the different postures.

The navigation works with only one hand. There is a fixed default speed of one second per element. Therefore, while the previous or next posture is shown, the focus in the interface moves to the previous or next element with each “tick”. Finally, element selection is specified in two steps: showing the select posture stops the navigation, and a second posture is required to actually confirm the selection (e.g. for security reasons).
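The navigation behavior described above can be sketched as a small state machine. This is a minimal Python illustration under several assumptions: the class `PostureNavigator`, the posture names (in particular `"confirm"` for the second posture), the clamping of the focus at the first and last element, and the rule that showing previous or next cancels a pending selection are not stated in the specification.

```python
from typing import List, Optional


class PostureNavigator:
    """Hypothetical focus navigator driven by recognized hand postures."""

    def __init__(self, elements: List[str]) -> None:
        self.elements = elements
        self.focus = 0                         # index of the focused element
        self.posture: Optional[str] = None     # posture currently shown
        self.awaiting_confirm = False          # True after "select" was shown
        self.selected: Optional[str] = None    # confirmed selection, if any

    def show(self, posture: Optional[str]) -> None:
        """Record the posture currently recognized (None means no posture)."""
        if posture == "select":
            self.awaiting_confirm = True       # stops the navigation
        elif posture == "confirm" and self.awaiting_confirm:
            self.selected = self.elements[self.focus]
            self.awaiting_confirm = False
        elif posture in ("previous", "next"):
            self.awaiting_confirm = False      # assumption: cancels pending selection
        self.posture = posture

    def tick(self) -> None:
        """Called once per second: move the focus by one element per tick."""
        if self.awaiting_confirm:
            return
        if self.posture == "next":
            self.focus = min(self.focus + 1, len(self.elements) - 1)
        elif self.posture == "previous":
            self.focus = max(self.focus - 1, 0)


nav = PostureNavigator(["play", "pause", "stop"])
nav.show("next")
nav.tick()
nav.tick()            # focus has moved two elements forward
nav.show("select")    # navigation stops here
nav.tick()            # no movement while a selection is pending
nav.show("confirm")   # second posture confirms the selection
```

A real implementation would drive `tick` from a one-second timer and `show` from the posture recognizer; both are left out here to keep the sketch self-contained.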

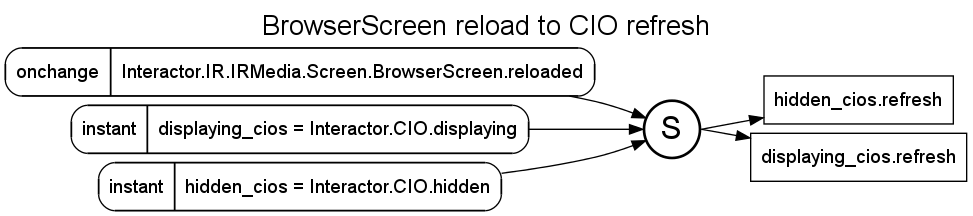

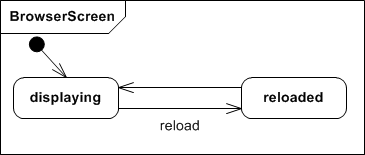

Figure 5. BrowserScreen Interactor

Figure 5 shows the BrowserScreen interactor, which defines the control options and events of a web browser. As of now, we only support the refresh button, which is used to re-display the interactors that are currently in state displaying or hidden (hidden already includes pre-caching in the browser).
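The effect of the refresh button can be illustrated by a simple filter over interactor states. The following Python sketch is an assumption-laden example: the `Interactor` class, the `refresh` function, and the state name `"initialized"` are hypothetical; only the states displaying and hidden come from the description above.

```python
from dataclasses import dataclass
from typing import Iterable, List


@dataclass
class Interactor:
    name: str
    state: str  # "displaying", "hidden", or another (hypothetical) state


def refresh(interactors: Iterable[Interactor]) -> List[str]:
    """Return the names of the interactors a refresh would re-display:
    those currently in state displaying or hidden."""
    return [i.name for i in interactors if i.state in ("displaying", "hidden")]


screen = [
    Interactor("menu", "displaying"),
    Interactor("dialog", "hidden"),
    Interactor("footer", "initialized"),  # hypothetical state, not re-displayed
]
to_redisplay = refresh(screen)
```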

An open source project that implements tools and a platform to enable the design and execution of multimodal interfaces for the web can be found here: http://www.multi-access.de