Abstract Interactor Model Specification

W3C Working Group Submission August 21st, 2012

- This version: http://www.multi-access.de/mint/aim/2012/20120821/

- Latest version: http://www.multi-access.de/mint/aim/

- Previous version: http://www.multi-access.de/mint/aim/2012/20120516/

- Editors: Sebastian Feuerstack, UFSCar; Jessica Colnago, UFSCar

This document is available under the W3C Document License. See the W3C Intellectual Rights Notice and Legal Disclaimers for additional information.

Overview

Using interactor models to describe interactive systems is a well-known and mature approach that has been discussed extensively, for instance in [1]. Interactors can be thought of as an architectural abstraction similar to objects in object-oriented programming [1]. Several definitions of the term interactor have been proposed, for instance the PISA [2] and the YORK [3] interactor. This specification defines an interactor as:

“a component in the description of an interactive system that encapsulates a state, the events that manipulate the state and the means by which the state is made perceivable to the user of the system.” [4]

Unlike these early approaches, which focused on the design of graphical interfaces, this specification proposes interactors to assemble multimodal user interfaces. An interactor can receive input from the user and send output from the system to the user. Each interactor is specified by a state machine that describes its behaviour. A data structure stores and manipulates the information that each interactor receives or sends to others.

UML class diagrams are used to specify the data structure. Each interactor is associated with at least one class that must inherit from an abstract “Interactor” class. This class contains the basic data structure that supports interactor persistence, such as storing the current states of an interactor, and encapsulates the functionality to load and execute the state machines.

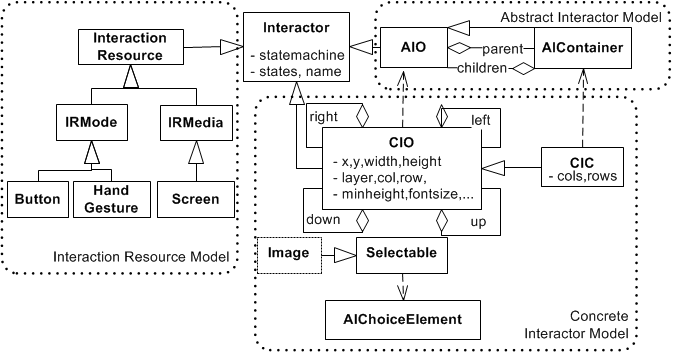

The figure above shows the Interactor class and its relations to the three basic interactors: the Abstract Interactor Object (AIO), the graphical Concrete Interactor Object (CIO), and the Interaction Resource (IR) interactors, which describe the interaction capabilities of physical devices. The specifications of the concrete and the interaction resource interactors are not part of this document, but they use the same modeling techniques (state charts and class diagrams) and have been described earlier [5,6,7].

The Concrete User Interface (CUI) model is used to design a user interface for a certain mode or media. The Abstract User Interface (AUI) model describes the part of the interface that contains the behaviour and data specification shared among all modes and media. Interactors share the same database (e.g. a tuplespace) to store and manage their data. Each interactor (for example, one that represents a button on a concrete graphical interface) is instantiated several times to reflect all of its occurrences in an application. The interactor is instantiated at the AUI model level and additionally for each supported modality at the CUI level. Furthermore, at system run-time, interaction resource (IR) interactors are instantiated once for each device that is connected to the system. Examples of IRs are a gesture recognizer that supports a certain set of gestures or postures, or a Wii remote control that supports a set of pre-defined gestures pointing to objects and additionally has a joypad control.

Abstract Interactor Model Structure

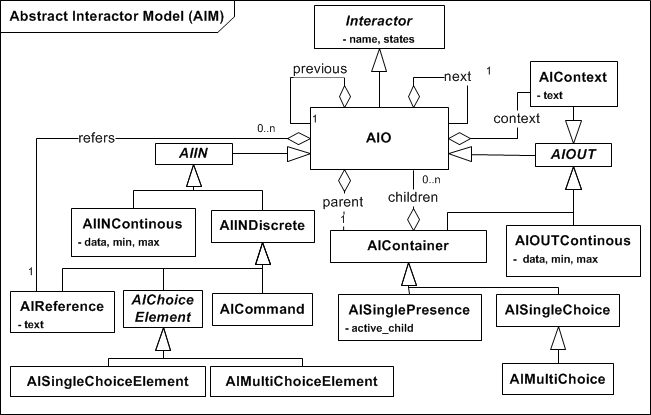

A multimodal setup combines at least one medium with several modes. Thus, some interface interactors are output-related (e.g. sound or a graphical interface), whereas others address the modes that the user employs to control the interface (such as a joystick, speech, or a mouse). At the abstract, mode- and media-independent model level this has two implications: a) input needs to be separated from output; and b) continuous input (or output), such as moving the mouse or displaying a graph, needs to be distinguished from discrete input (or output). The following two sections describe how the Abstract Interactor Model (AIM) addresses these implications: first, by organizing all AIM interactors in a static structure (a class diagram), and second, by specifying their behavior (by state charts).

The AIO is the parent interactor of all other interactors of the AIM and is derived from the core Interactor, which is the base interactor of all elements from all interface models.

The AIM distinguishes between abstract interactor input (AIIN) and output (AIOUT) elements, each of which can be either continuous or discrete. Examples of continuous output are a graphical progress bar, a chart, a tone that changes its pitch, or a light that can be dimmed. Discrete output can be implemented in different ways, such as by written text, by sound, or by a spoken note.

Grouping of interactors is handled by Abstract Interactor Containers (AIContainer). AIContainers are derived from the discrete output interactor and can be specialized to realize single (AISingleChoice) or multiple choices (AIMultiChoice). A discrete output interactor called AIContext can be explicitly associated with every AIO. The AIContext adds contextual information that helps the user to control the interface or to understand the interface interactor. This could be, for instance, a tooltip, a picture, or a sound file.

User input can be continuous as well, for example moving a slider or showing a distance with two hands. Discrete user input (AIINDiscrete) can be performed by commands that are issued by voice or by pressing a physical or virtual button, for instance. We consider choosing one or several things from a list as user input as well (AISingleChoose and AIMultiChoose respectively).

In order to support distributing input and output to different devices, we strictly separate user input from output in the user interface models. This strict separation has some implications. For instance, a user choice must be split into two different elements: the list of elements to choose from is considered output (since it only signals that the user can choose, together with a grouping of interactors), whereas the actual elements to choose from are modeled separately as AIIN (by AISingleChoiceElement and AIMultiChoiceElement).
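
The static structure described in this section can be sketched as a plain class hierarchy. This is only an illustrative sketch in Python, not part of the specification: the class bodies are empty, the helper lineage is hypothetical, and placing AIChoiceElement directly below AIIN is an assumption (the text only states that choice elements are modeled as AIIN).

```python
class Interactor:
    """Core base interactor shared by all interface models."""

class AIO(Interactor):
    """Abstract Interactor Object, parent of all AIM interactors."""

# Input and output are strictly separated below the AIO.
class AIIN(AIO): pass
class AIOUT(AIO): pass

# Each side has a continuous and a discrete variant.
class AIINContinuous(AIIN): pass
class AIINDiscrete(AIIN): pass
class AIOUTContinuous(AIOUT): pass
class AIOUTDiscrete(AIOUT): pass

# Context information and grouping are discrete output.
class AIContext(AIOUTDiscrete): pass
class AIContainer(AIOUTDiscrete): pass
class AISingleChoice(AIContainer): pass
class AIMultiChoice(AIContainer): pass
class AISinglePresence(AIContainer): pass

# Commands and the elements to choose from are user input.
class AICommand(AIIN): pass
class AIChoiceElement(AIIN): pass  # assumption: directly below AIIN
class AISingleChoiceElement(AIChoiceElement): pass
class AIMultiChoiceElement(AIChoiceElement): pass


def lineage(cls):
    """Return the names of cls and its ancestors, most specific first."""
    return [c.__name__ for c in cls.__mro__ if c is not object]


# A choice list is output, while its elements are input.
list_lineage = lineage(AISingleChoice)
element_lineage = lineage(AISingleChoiceElement)
```

Here list_lineage passes through AIOUT and element_lineage passes through AIIN, which mirrors the split of a user choice into an output part and an input part.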

AIO - Abstract Interactor Object

An Abstract Interactor Object (AIO) is the abstract basic entity of all other elements of the AUI specification. Like all other elements of the AUI description it does not implement a representation in the user interfaces (which is done in the CUI description) but specifies common behavior that is required to be implemented for all multimodal setups (combinations of IR).

| SCXML | Since | Updated |
|---|---|---|
| AIO.scxml | 20111201 | 20120821 |

Lifecycle

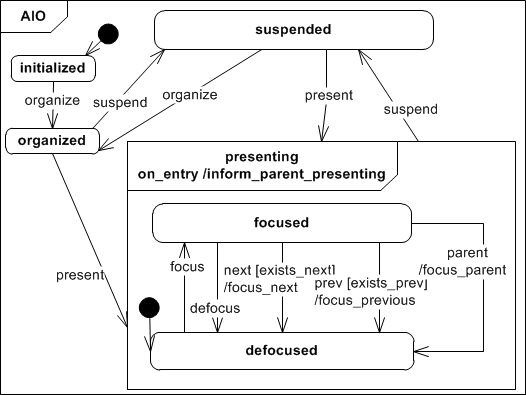

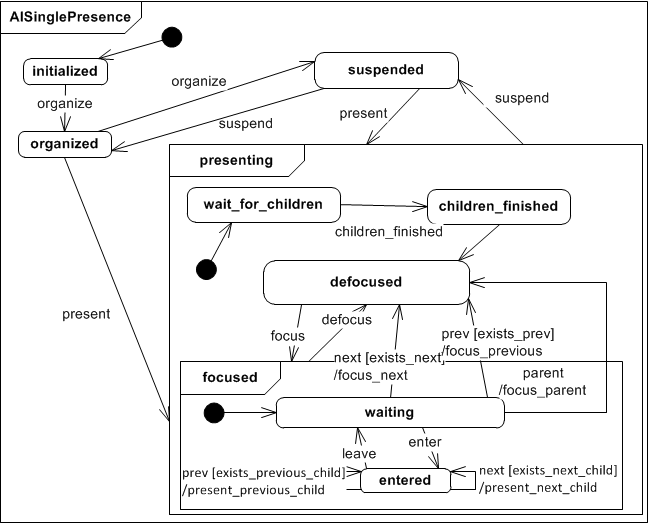

After an AIO has been initialized and successfully organized, it can enter the presenting state. To calculate the AIO organisation, a basic navigation scheme is established for all AIOs that are presented together at a certain time in a user interface dialog. An AIO that is presenting can be removed from the user interface by suspending it, which moves it to the suspended state. Thereafter it can be presented again, or organized again if the navigation scheme needs to be updated.

An AIO offers basic navigation capabilities to reach the previous (prev) and the next (next) AIO element of a user interface. Navigating forward and backward through the AIOs moves the focus, which represents the user's current focus of attention in the current user interface representation. A focus can be set by the focus event and removed by the defocus event. In general, an arbitrary set of AIOs can be focused (e.g. for multi-touch and collaborative, multi-user interfaces). Navigation changes the focus of one AIO at a time, as specified by the behavior chart: if the user issues the next event, the next element gets focused whereas the previously focused one is defocused. The same mechanism is applied in the opposite direction (prev event).
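
The lifecycle and the focus navigation can be approximated by a small hand-written state machine. The following Python sketch is not the AIO.scxml state chart. The event name organize, the process method, and the rule that an AIO loses its focus when it leaves presenting are assumptions made for illustration; the events present, suspend, focus, defocus, next, and prev are taken from this specification.

```python
class AIO:
    """Lifecycle and focus handling of a single abstract interactor."""

    # Allowed lifecycle transitions: (state, event) -> next state.
    TRANSITIONS = {
        ("initialized", "organize"): "organized",
        ("organized", "present"): "presenting",
        ("presenting", "suspend"): "suspended",
        ("suspended", "present"): "presenting",
        ("suspended", "organize"): "organized",
    }

    def __init__(self, name):
        self.name = name
        self.state = "initialized"
        self.focused = False
        self.prev = None  # neighbours from the navigation scheme
        self.next = None

    def process(self, event):
        """Apply an event; return True if it was accepted."""
        if event in ("focus", "defocus", "next", "prev"):
            return self._navigate(event)
        target = self.TRANSITIONS.get((self.state, event))
        if target is None:
            return False
        self.state = target
        if target != "presenting":
            self.focused = False  # assumption: focus is lost outside presenting
        return True

    def _navigate(self, event):
        if self.state != "presenting":
            return False
        if event == "focus":
            self.focused = True
            return True
        if not self.focused:
            return False
        if event == "defocus":
            self.focused = False
            return True
        neighbour = self.next if event == "next" else self.prev
        if neighbour is None or neighbour.state != "presenting":
            return False
        # Navigation moves the focus of exactly one AIO at a time.
        self.focused = False
        neighbour.focused = True
        return True


a, b = AIO("a"), AIO("b")
a.next, b.prev = b, a
for aio in (a, b):
    aio.process("organize")
    aio.process("present")
a.process("focus")
a.process("next")  # a is defocused, b gets the focus
```

Because the focus is stored per AIO, several AIOs can be focused at once by sending each of them a focus event, which matches the multi-user case mentioned above.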

AIIN - Abstract Interactor In

The Abstract Interactor In (AIIN) describes all elements of the AUI that can be used to input data to the computer. It derives its behavior and structure from the AIO class. It is used to describe mappings between input IRs and the appropriate abstract interactors (which need to derive from this interactor).

| SCXML | Since | Updated |
|---|---|---|
| abstract | 20111201 | - |

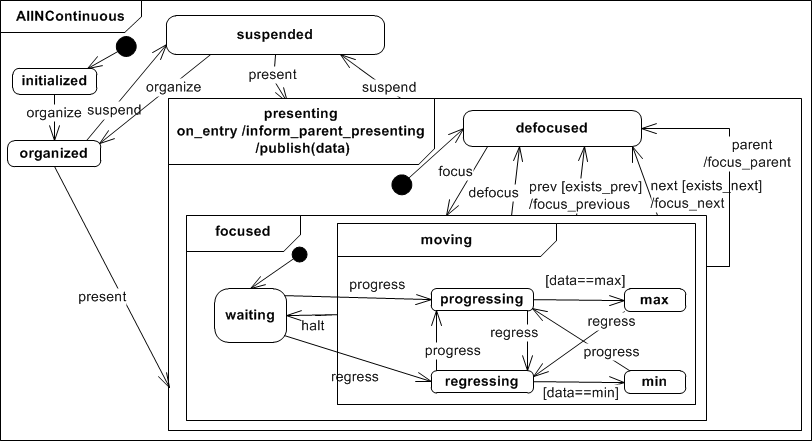

AIINContinuous - Abstract Interactor In Continuous

The Abstract Interactor In Continuous sums up all continuous input elements, like graphical sliders and scroll bars, physical knobs, or gestures that represent distances. When the interactor is in state presenting, it is initially set to waiting and stays there until it receives a progress or regress event, which moves it to state progressing or regressing respectively. It returns to waiting as soon as it receives a halt event. The AIINContinuous interactor class can store a minimum (min) and a maximum (max) value for the data. While regressing or progressing, the produced data is checked against these boundaries, and the interactor changes to min or max if a boundary is reached.

While the interactor is in the moving state, it continuously publishes its progressing or regressing data to a channel to which other interactors can subscribe.

| SCXML | Since | Updated |
|---|---|---|
| AIINContinuous.scxml | 20111201 | 20120821 |
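
A minimal Python sketch of the behavior described for AIINContinuous is given below. It only covers the substates of presenting. The Channel class, the amount parameter of progress and regress, and the rule that halt only applies while moving are assumptions for illustration and are not defined by this specification.

```python
class Channel:
    """Hypothetical publish/subscribe channel for continuous data."""

    def __init__(self):
        self._subscribers = []

    def subscribe(self, callback):
        self._subscribers.append(callback)

    def publish(self, value):
        for callback in self._subscribers:
            callback(value)


class AIINContinuous:
    """Substates of presenting: waiting, progressing, regressing, min, max."""

    def __init__(self, channel, minimum, maximum, value):
        self.channel = channel
        self.min, self.max = minimum, maximum
        self.data = value
        self.state = "waiting"

    def progress(self, amount):
        self._move(self.data + amount, "progressing")

    def regress(self, amount):
        self._move(self.data - amount, "regressing")

    def halt(self):
        # Assumption: halt only leaves the two moving states.
        if self.state in ("progressing", "regressing"):
            self.state = "waiting"

    def _move(self, value, moving_state):
        # Check the produced data against the stored boundaries.
        if value >= self.max:
            self.data, self.state = self.max, "max"
        elif value <= self.min:
            self.data, self.state = self.min, "min"
        else:
            self.data, self.state = value, moving_state
        # Publish every change so that subscribed interactors can follow.
        self.channel.publish(self.data)


received = []
channel = Channel()
channel.subscribe(received.append)
slider = AIINContinuous(channel, minimum=0, maximum=10, value=5)
slider.progress(3)  # moves to progressing with data 8
slider.progress(4)  # boundary reached, moves to max with data 10
slider.regress(2)   # moves to regressing with data 8
slider.halt()       # returns to waiting
```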

AIINDiscrete - Abstract Interactor In Discrete

The Abstract Interactor In Discrete sums up all discrete input elements.

| SCXML | Since | Updated |
|---|---|---|
| abstract | 20111201 | - |

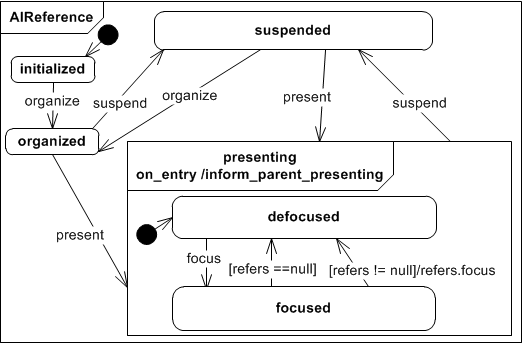

AIReference - Abstract Interactor Reference

The Abstract Interactor Reference (AIReference) can be associated with every AIO and lets the user refer to the targeted AIO. This might be a label or a hot-key in a graphical user interface, a voice menu command, or a visual tag, for instance. If there should be more than one way to address a certain AIO (a way to address it should exist in every modality), more than one AIReference can be associated with one AIO.

While the AIReference interactor is in state presenting and receives a focus event, it looks up its targeted AIO interactor and forwards the focus to it. If the AIReference has no target, it loses the focus instantly.

| SCXML | Since | Updated |
|---|---|---|
| AIReference.scxml | 20111201 | 20120821 |

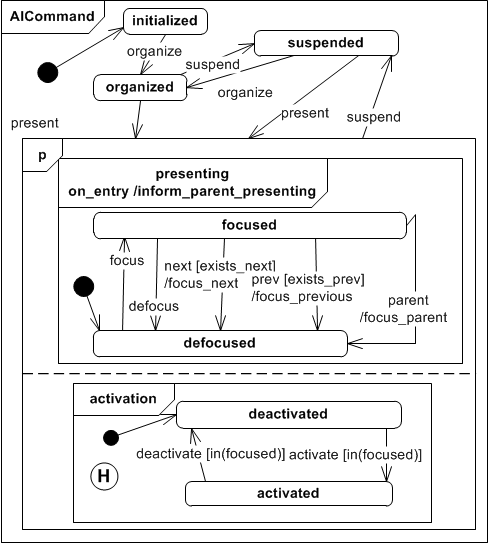

AICommand - Abstract Interactor Command

If the Abstract Interactor Command (AICommand) receives a present event, it enables the activation of a command, which could result, by means of a mapping, in a backend function call or in the manipulation of another interactor, for instance. The AICommand is initially deactivated and gets activated only if it is focused as well. The command activation state is persistent even if the interactor gets suspended. An AICommand is derived from the AIIN class since it describes an input that can only be triggered by the user.

| SCXML | Since | Updated |
|---|---|---|
| aicommand.scxml | 20111201 | 20120821 |
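
The activation rule of the AICommand can be illustrated with a short Python sketch. It is a simplification and not the aicommand.scxml state chart: the lifecycle is reduced to three states, the event names activate and deactivate are assumptions, and the action callback merely stands in for a mapping to a backend function call.

```python
class AICommand:
    """Command that can only be activated while presenting and focused."""

    def __init__(self, action):
        self.action = action            # hypothetical stand-in for a mapping
        self.state = "initialized"      # simplified lifecycle
        self.focused = False
        self.activation = "deactivated"

    def process(self, event):
        """Apply an event; return True if it was accepted."""
        if event == "present":
            self.state = "presenting"
        elif event == "suspend":
            # The activation state is deliberately left untouched here.
            self.state, self.focused = "suspended", False
        elif self.state != "presenting":
            return False
        elif event == "focus":
            self.focused = True
        elif event == "defocus":
            self.focused = False
        elif (event == "activate" and self.focused
              and self.activation == "deactivated"):
            self.activation = "activated"
            self.action()
        elif event == "deactivate" and self.activation == "activated":
            self.activation = "deactivated"
        else:
            return False
        return True


calls = []
command = AICommand(lambda: calls.append("backend call"))
command.process("present")
rejected = command.process("activate")  # not focused yet, so not accepted
command.process("focus")
command.process("activate")             # accepted, triggers the action once
command.process("suspend")
command.process("present")              # activation state survived the suspend
```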

AIChoiceElement - Abstract Interactor Choice Element

The Abstract Interactor Choice Element (AIChoiceElement) extends the AIO presenting state to allow elements to be dragged and dropped.

| SCXML | Since | Updated |
|---|---|---|
| abstract | 20111201 | - |

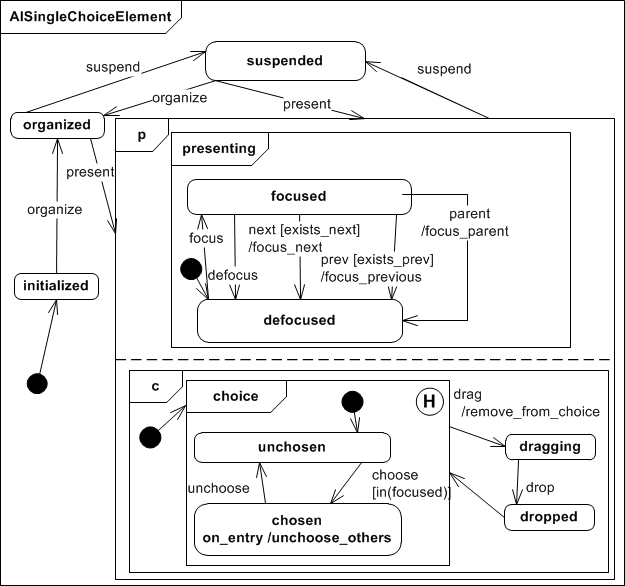

AISingleChoiceElement - Abstract Interactor Single Choice Element

The Abstract Interactor Single Choice Element extends the AIChoiceElement presenting state by adding a choice state that runs in parallel with the presenting super state. An AISingleChoiceElement is initially unchosen. If it receives the choose event while it is focused, all other elements get unchosen. This ensures that only one element is chosen at a time. The choice state is stored persistently even if the interactor gets suspended.

While in presenting, it can be dragged and dropped to another choice interactor.

| SCXML | Since | Updated |
|---|---|---|
| AISingleChoiceElement.scxml | 20111201 | 20120821 |

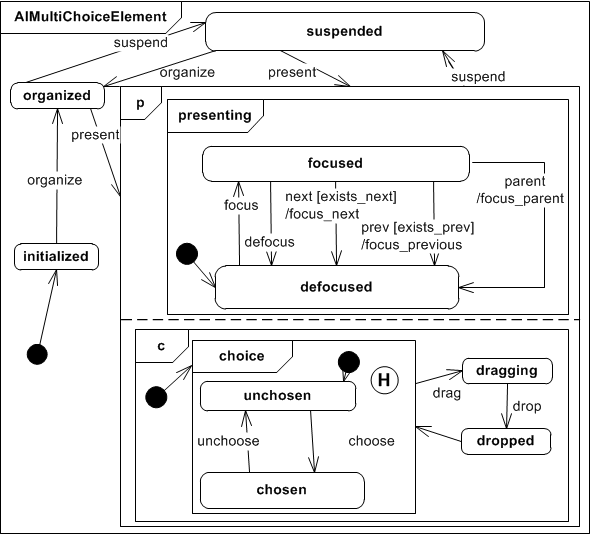

AIMultiChoiceElement - Abstract Interactor Multi Choice Element

The AIMultiChoiceElement works like the AISingleChoiceElement, except that choosing it does not unchoose all other elements.

| SCXML | Since | Updated |
|---|---|---|
| AIMultiChoiceElement.scxml | 20111201 | 20120821 |
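
The difference between the two choice elements can be sketched in a few lines of Python. This is an illustration only: drag and drop is left out, the shared siblings list and the helper chosen are hypothetical, and the state name chosen is an assumption (the specification only names the unchosen state).

```python
class AIChoiceElement:
    """Element of a choice; only the parallel choice state is modeled."""

    def __init__(self, name, siblings):
        self.name = name
        self.focused = False
        self.choice = "unchosen"
        self.siblings = siblings  # shared list of all elements of one choice
        siblings.append(self)

    def choose(self):
        # The choose event is only accepted while the element is focused.
        if not self.focused:
            return False
        self.choice = "chosen"
        return True

    def unchoose(self):
        self.choice = "unchosen"


class AISingleChoiceElement(AIChoiceElement):
    def choose(self):
        if not super().choose():
            return False
        # Unchoose every other element so that only one stays chosen.
        for other in self.siblings:
            if other is not self:
                other.unchoose()
        return True


class AIMultiChoiceElement(AIChoiceElement):
    """Uses the inherited choose and leaves the other elements untouched."""


def chosen(elements):
    return [element.name for element in elements if element.choice == "chosen"]


single, multi = [], []
for name in "abc":
    AISingleChoiceElement(name, single)
    AIMultiChoiceElement(name, multi)

# Focus and choose the first two elements of each choice in turn.
for elements in (single, multi):
    for element in elements[:2]:
        element.focused = True
        element.choose()

single_result = chosen(single)
multi_result = chosen(multi)
```

After choosing a and then b, only b remains chosen in the single choice, whereas both stay chosen in the multi choice.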

AIOUT - Abstract Interactor Out

The Abstract Interactor Out (AIOUT) describes all abstract interactors that can be used to output information to the user. It derives its structure and behavior from the AIO interactor description and is used to couple output interaction resources to abstract interactors.

| SCXML | Since | Updated |
|---|---|---|
| abstract | 20111201 | - |

AIOUTDiscrete - Abstract Interactor Out Discrete

The Abstract Interactor Out Discrete describes all discrete outputs, which include lists of elements presented to the user.

| SCXML | Since | Updated |
|---|---|---|
| abstract | 20111201 | - |

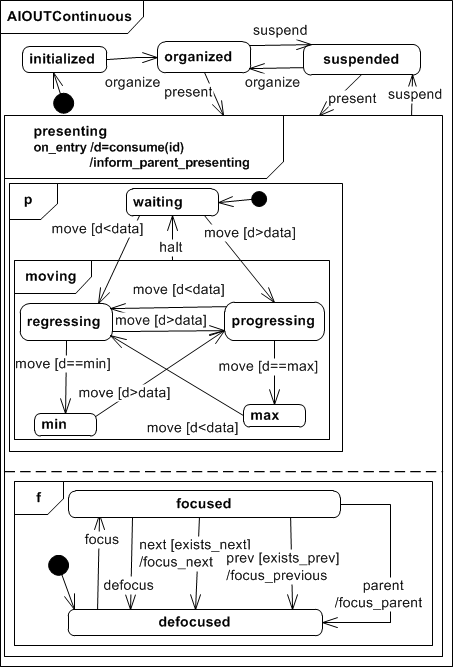

AIOUTContinuous - Abstract Interactor Out Continuous

The Abstract Interactor Out Continuous sums up all continuous output interactors, like graphical progress bars, a dimmable light, or changes of the tone pitch for sound output, for instance. When the interactor is in state presenting, it subscribes itself to a channel id in order to consume streaming data d and sets itself to waiting. When new data d is received, it checks whether d is greater or less than the previously received data and changes its state to progressing or regressing respectively.

It returns to waiting when it receives the halt event. Furthermore, a minimum (min) and a maximum (max) limit can be set, which results in a state change to min or max respectively when the limit is reached.

| SCXML | Since | Updated |
|---|---|---|
| AIOUTContinuous.scxml | 20120203 | 20120821 |
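
The way an AIOUTContinuous interactor reacts to streamed data can be sketched as follows. The Python code is an illustration under assumptions: the channel subscription is replaced by direct calls to a hypothetical consume method, the first sample and repeated values leave the state unchanged, and halt is accepted in every substate.

```python
class AIOUTContinuous:
    """Substates of presenting: waiting, progressing, regressing, min, max."""

    def __init__(self, minimum=None, maximum=None):
        self.min, self.max = minimum, maximum
        self.data = None        # previously received data, if any
        self.state = "waiting"

    def consume(self, d):
        """Handle one new data item d from the subscribed channel."""
        previous, self.data = self.data, d
        if self.max is not None and d >= self.max:
            self.state = "max"
        elif self.min is not None and d <= self.min:
            self.state = "min"
        elif previous is not None and d > previous:
            self.state = "progressing"
        elif previous is not None and d < previous:
            self.state = "regressing"
        # Assumption: the first sample or an unchanged value keeps the state.

    def halt(self):
        self.state = "waiting"


bar = AIOUTContinuous(minimum=0, maximum=10)
states = []
for d in [3, 5, 4, 10]:
    bar.consume(d)
    states.append(bar.state)
bar.halt()
```

For the stream 3, 5, 4, 10 the recorded states are waiting, progressing, regressing, and max, and the final halt returns the interactor to waiting.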

AIContext - Abstract Interactor Context

The Abstract Interactor Context offers contextual information that helps the user to understand an AIO's interaction capabilities. This might be, for instance, a help text, a picture, or a video. An AIContext object can be associated with every AIO. Thus, for a grouping AIContainer it might be used to explain to the user what a certain part of the user interface is designed for.

| SCXML | Since | Updated |
|---|---|---|
| AIContext.scxml | 20120203 | 20120821 |

AIContainer - Abstract Interactor Container

The Abstract Interactor Container (AIContainer) allows grouping of AIOs. Each AIContainer must have one parent AIContainer (with the exception of the root AIContainer) and stores references to all AIOs that it contains. The grouping of AIOs is considered an output mode. The AIContainer details the navigation options of the AIO's presenting state. Besides the next, prev, focus, and defocus events, the AIContainer supports navigation to parent AIContainers as well as setting the focus to the first child AIO by the child event.

| SCXML | Since | Updated |
|---|---|---|
| AIContainer.scxml | 20120203 | 20120821 |
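
The two additional navigation options of the AIContainer can be sketched in Python as shown below. The sketch ignores the lifecycle and the next and prev events. The add and process methods, and the choice to keep the focus when no target exists, are assumptions for illustration; parent and child are the event names used in this specification.

```python
class AIO:
    def __init__(self, name):
        self.name = name
        self.parent = None   # parent AIContainer, None for the root
        self.focused = False


class AIContainer(AIO):
    def __init__(self, name):
        super().__init__(name)
        self.children = []   # references to all contained AIOs

    def add(self, aio):
        aio.parent = self
        self.children.append(aio)
        return aio

    def process(self, event):
        """Handle parent or child; return the AIO that holds the focus."""
        if not self.focused:
            return None
        if event == "parent":
            target = self.parent
        elif event == "child":
            target = self.children[0] if self.children else None
        else:
            target = None
        if target is None:
            return self      # assumption: the focus stays where it is
        self.focused, target.focused = False, True
        return target


root = AIContainer("root")
form = root.add(AIContainer("form"))
name = form.add(AIO("name"))
email = form.add(AIO("email"))

form.focused = True
after_child = form.process("child")    # focus moves to the first child

name.focused, form.focused = False, True
after_parent = form.process("parent")  # focus moves to the parent container
```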

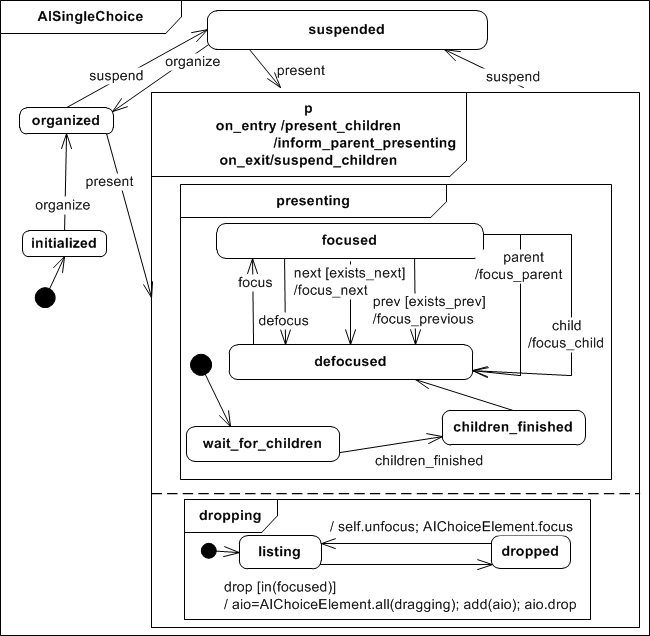

AISingleChoice - Abstract Interactor Single Choice

The Abstract Interactor Single Choice (AISingleChoice) specifies a container from which the user can choose only one element at a time. The container is initially set to listing and changes to dropped when it receives the drop event (when this happens, all elements that are in dragging get dropped). After that, it defocuses itself and focuses the AIChoiceElement.

| SCXML | Since | Updated |
|---|---|---|
| AISingleChoice.scxml | 20111201 | 20120821 |

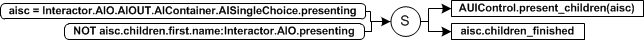

If the interactor is presented after being organized (or hidden), it first waits for all its children to be presented. This is performed by a mapping between the AISingleChoice and its children that triggers the children_finished event upon success.

| Mapping | Since | Updated |
|---|---|---|
| aisinglechoice_present_to_child_present.xml | 20120821 | - |

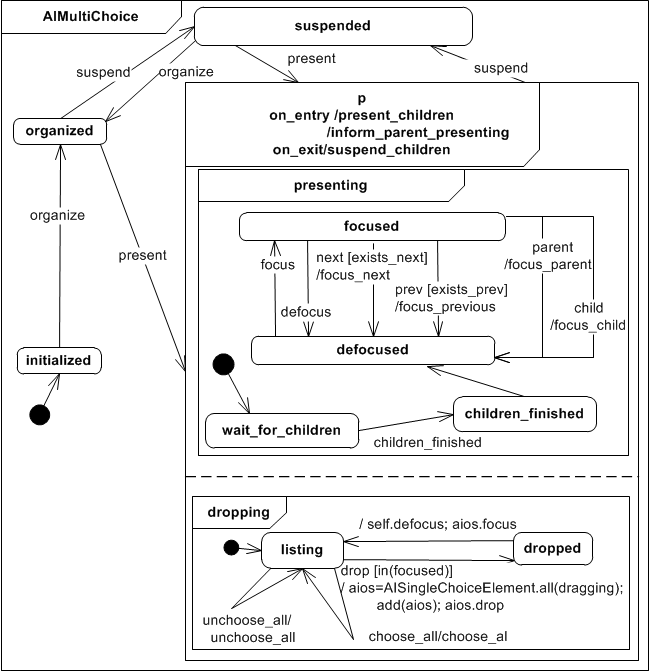

AIMultiChoice - Abstract Interactor Multi Choice

The Abstract Interactor Multi Choice (AIMultiChoice) specifies a container from which the user can choose an arbitrary number of elements. It works similarly to the AISingleChoice, except that it additionally offers the choose_all and unchoose_all options for its children.

| SCXML | Since | Updated |
|---|---|---|
| AIMultiChoice.scxml | 20111201 | 20120821 |

AISinglePresence - Abstract Interactor Single Presence

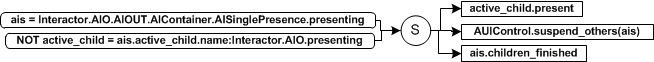

The Abstract Interactor Single Presence (AISinglePresence) extends the AIContainer. Unlike the AIContainer, which presents all its child interactors at the same time, the AISinglePresence presents only one of its children to the user at a time. When entering state presenting, the interactor checks whether there is an active child; if not, the first child gets presented (present_first_child). The interactor is initially defocused, so it must become the currently focused object before it can be used. When focused, it stays in state waiting until it receives the enter event. While in state waiting, the interactor can receive a next, prev (previous), or parent event for interface navigation. Once the interactor has been entered, the navigation events next and prev are used to navigate within the interactor, between its children. A suspend event suspends all children of the interactor (suspend_children) and saves the currently active child so that it can be recovered if the same AISinglePresence interactor is presented again.

| SCXML | Since | Updated |
|---|---|---|
| AISinglePresence.scxml | 20111201 | 20120821 |

If the interactor is presented after being organized (or hidden), it waits for the active_child to be presented and all the others to be suspended. These actions are performed by a mapping between the AISinglePresence and its children that triggers the children_finished event upon success.

| Mapping | Since | Updated |
|---|---|---|
| aisinglepresence_present_to_child_present.xml | 20120821 | - |
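
The behavior of the AISinglePresence, for example a tabbed view that shows one tab at a time, can be sketched in Python. The sketch is an assumption-laden illustration and not the AISinglePresence.scxml state chart: the Child class, the mode attribute, and the decision not to wrap around at the first or last child are hypothetical, and the interface navigation in state waiting as well as the children_finished mapping are left out.

```python
class Child:
    def __init__(self, name):
        self.name = name
        self.state = "suspended"


class AISinglePresence:
    """Container that presents exactly one of its children at a time."""

    def __init__(self, children):
        self.children = list(children)
        self.active = None        # index of the active child, kept on suspend
        self.state = "suspended"
        self.mode = "defocused"   # defocused -> waiting -> entered

    def present(self):
        if self.active is None and self.children:
            self.active = 0       # corresponds to present_first_child
        self.state = "presenting"
        self.mode = "defocused"
        self._show_active()

    def suspend(self):
        # Corresponds to suspend_children; self.active is kept for later.
        for child in self.children:
            child.state = "suspended"
        self.state = "suspended"

    def focus(self):
        if self.state == "presenting" and self.mode == "defocused":
            self.mode = "waiting"

    def enter(self):
        if self.state == "presenting" and self.mode == "waiting":
            self.mode = "entered"

    def next(self):
        self._step(+1)

    def prev(self):
        self._step(-1)

    def _step(self, delta):
        # Only an entered interactor navigates between its children.
        if (self.state != "presenting" or self.mode != "entered"
                or self.active is None):
            return
        target = self.active + delta
        if 0 <= target < len(self.children):  # assumption: no wrap-around
            self.active = target
            self._show_active()

    def _show_active(self):
        for index, child in enumerate(self.children):
            child.state = "presenting" if index == self.active else "suspended"


tabs = AISinglePresence([Child("a"), Child("b"), Child("c")])
tabs.present()   # no active child yet, so the first one is presented
tabs.focus()
tabs.enter()
tabs.next()      # child b becomes the active child
tabs.suspend()
tabs.present()   # child b is recovered as the active child
child_states = [child.state for child in tabs.children]
```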

Reference Implementation

An open source project that implements tools and a platform to enable the design and execution of multimodal interfaces for the web can be found here: http://www.multi-access.de

History

Changes for the August 21st, 2012 version

- This specification version reflects the reference implementation MINT Platform 2012; earlier versions match MINT Platform 2010.

- The paper “Engineering Device-spanning, Multimodal Web Applications using a Model-based Design Approach” contains a comprehensive description of the MINT 2012 platform version.

- Removed synchronization statements in on_entry for all AIM interactors to remove the dependency of the AIM on the CIM models. Instead, the MINT 2012 platform performs synchronizations using mappings as well. All basic mappings between AIM and CIM will be published in a separate CIM example document later on.

- Consistent use of inform_parent_presenting for all interactors. This method can be used to inform the parent of a presentation event.

- Navigation with previous, next, and parent for AIOUTContinuous and AIINContinuous interactors.

- Changed state name listed to unchosen in AISingleChoiceElement and AIMultiChoiceElement.

- Added a mapping to ensure that the interactor waits until its children are presented for AISingleChoice, AIMultiChoice, and AISinglePresence.

- Removed label attribute from AIO. Instead, an AIReference has to be used to implement a label. There the relevant attribute is called text.

- Renamed attribute description of AIContext to text.

- The AIOUTContinuous interactor now consistently uses the conditional move event for performing state changes in moving.

References

[1] Panos Markopoulos. A compositional model for the formal specification of user interface software. PhD thesis, Queen Mary and Westfield College, University of London, 1997.

[2] F. Paternò. A theory of user-interaction objects. Journal of Visual Languages & Computing, 5(3):227–249, 1994.

[3] D. Duke, G. Faconti, M. Harrison, and F. Paternò. Unifying views of interactors. In AVI ’94: Proceedings of the Workshop on Advanced Visual Interfaces, pages 143–152, New York, NY, USA, 1994. ACM, ISBN:0-89791-733-2.

[4] D.J. Duke and M.D. Harrison. Abstract interaction objects. Computer Graphics Forum, 12(3):25–36, 1993.

[5] Sebastian Feuerstack, Mauro Dos Santos Anjo, Jessica Colnago and Ednaldo Pizzolato; Modeling of User Interfaces with State-Charts to Accelerate Test and Evaluation of different Gesture-based Multimodal Interactions; Workshop “Modellbasierte Entwicklung von Benutzungsschnittstellen (MoBe2011)”, Informatik 2011, 4-7 October 2011, Berlin, Germany.

[6] Sebastian Feuerstack, Ednaldo Pizzolato; Building Multimodal Interfaces out of Executable, Model-based Interactors and Mappings; HCI International 2011; 14th International Conference on Human-Computer Interaction; J.A. Jacko (Ed.): Human-Computer Interaction, Part I, HCII 2011, LNCS 6761, pp. 221–228. Springer, Heidelberg (2011), 9-14 July 2011, Hilton Orlando Bonnet Creek, Orlando, Florida, USA.

[7] Sebastian Feuerstack, Allan Oliveira, Regina Araujo; Model-based Design of Interactions that can bridge Realities – The Augmented Drag-and-Drop; 13th Symposium on Virtual and Augmented Reality (SVR 2011), ISSN 2177-676, 23rd-26th May 2011, Uberlândia, Minas Gerais, Brazil.